Key Takeaway

Eighty percent of enterprise AI initiatives fail to deliver measurable value — not because of flawed algorithms, but because of missing governance, misaligned operating models, and the absence of a business-driven prioritization discipline.

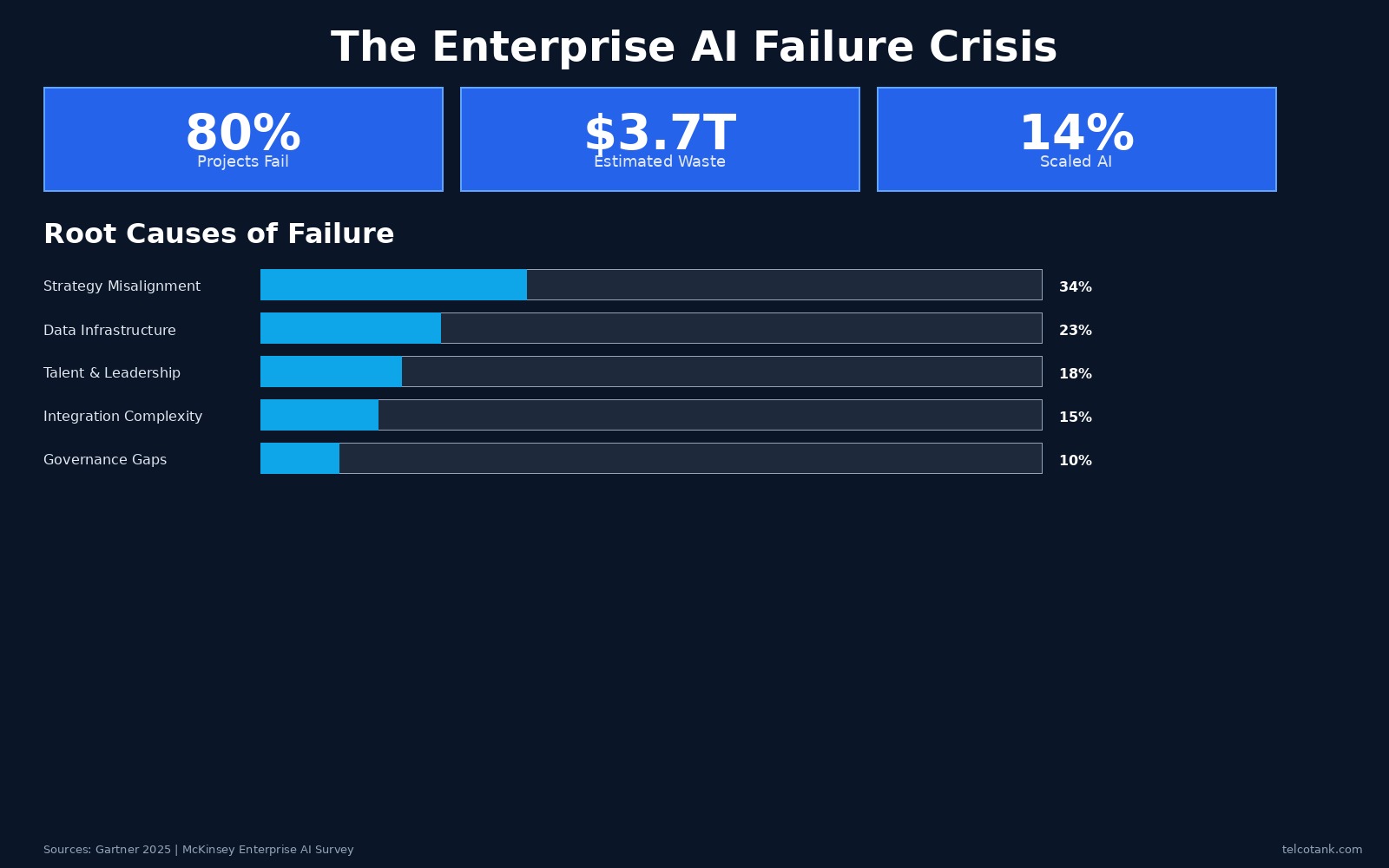

The numbers are staggering and, for most boardrooms, deeply uncomfortable. According to Gartner's 2025 enterprise technology survey, roughly 80 percent of AI initiatives fail to progress beyond the pilot stage. McKinsey's latest Global AI Survey finds that just 14 percent of organizations have successfully scaled AI across multiple business units. The gap between AI ambition and AI reality is not narrowing; it is widening. And the root cause is not technology. It is the absence of a business-driven approach to AI transformation.

The estimated cost of this failure is extraordinary. Industry analysts project that by 2027, global enterprises will have wasted approximately $3.7 trillion on AI projects that never delivered their promised returns. The technology is not at fault: the models work, the cloud infrastructure is mature, and the talent, while scarce, is available. What is missing is the connective tissue between AI capability and business value: governance, operating model design, use-case prioritization, and workforce adoption.

The pattern of failure is remarkably consistent across industries and geographies. An enterprise identifies AI as a strategic priority. A chief data officer or innovation team launches a handful of pilots — often in areas chosen for technical feasibility rather than business impact. The pilots produce encouraging results in controlled environments. Then, when the time comes to scale, the organization hits a wall. There is no governance framework to manage risk. There is no operating model to integrate AI into existing workflows. There is no mechanism to prioritize which use cases deserve investment and which should be killed. The pilots remain pilots. The board grows skeptical. Investment stalls.

The first discipline that separates winning organizations from the rest is rigorous use-case prioritization. Enterprises that succeed at AI do not pursue every opportunity simultaneously. They begin with a systematic inventory, typically generating 40 to 60 potential use cases across business units, and then apply a structured scoring methodology to narrow the field to 8 to 12 priority initiatives. The scoring criteria are relentlessly business-focused: revenue uplift potential, cost reduction estimate, data availability, technical complexity, time to value, and strategic alignment. Use cases are then plotted on an impact-versus-feasibility matrix that sorts them into four groups: quick wins, strategic bets, efficiency plays, and initiatives that should be deprioritized entirely.
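To make the scoring step concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a prescribed methodology: the 1-to-5 scale, the equal weighting, the 3.0 threshold, the way the six criteria are split between impact and feasibility, and the mapping of the four groups onto matrix quadrants are all hypothetical choices that a real program would calibrate with its business-unit leaders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """One candidate use case, scored 1 (low) to 5 (high) per criterion.

    The scale and field names are assumptions made for illustration.
    """
    name: str
    revenue_uplift: int
    cost_reduction: int
    strategic_alignment: int
    data_availability: int
    technical_complexity: int  # 1 = simple, 5 = hard (inverted below)
    time_to_value: int         # 1 = fast, 5 = slow (inverted below)


def impact(uc: UseCase) -> float:
    """Average of the business-value criteria (assumed equal weights)."""
    return (uc.revenue_uplift + uc.cost_reduction + uc.strategic_alignment) / 3


def feasibility(uc: UseCase) -> float:
    """Average of the delivery criteria; complexity and time are inverted
    so that a higher score always means 'easier to deliver'."""
    return (
        uc.data_availability
        + (6 - uc.technical_complexity)
        + (6 - uc.time_to_value)
    ) / 3


def quadrant(uc: UseCase, threshold: float = 3.0) -> str:
    """Place a use case in one of four groups (assumed quadrant mapping)."""
    high_impact = impact(uc) >= threshold
    high_feasibility = feasibility(uc) >= threshold
    if high_impact and high_feasibility:
        return "quick win"
    if high_impact:
        return "strategic bet"
    if high_feasibility:
        return "efficiency play"
    return "deprioritize"


def shortlist(use_cases: list[UseCase], top_n: int = 12) -> list[UseCase]:
    """Rank by combined score and drop anything marked 'deprioritize'."""
    ranked = sorted(
        use_cases, key=lambda uc: impact(uc) + feasibility(uc), reverse=True
    )
    return [uc for uc in ranked if quadrant(uc) != "deprioritize"][:top_n]


# Hypothetical inventory; a real one would hold 40 to 60 entries.
inventory = [
    UseCase("churn prediction", 4, 3, 5, 4, 2, 2),
    UseCase("autonomous network planning", 5, 4, 4, 2, 5, 5),
    UseCase("invoice matching", 1, 3, 2, 5, 1, 1),
    UseCase("internal trivia bot", 1, 1, 1, 2, 4, 4),
]

for uc in shortlist(inventory):
    print(f"{uc.name}: {quadrant(uc)}")
```

The value of a sketch like this lies less in the arithmetic than in forcing every candidate through the same explicit criteria, so that the shortlist can be challenged and defended in business terms.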

This prioritization discipline is more important than any model architecture or technology stack decision. It ensures that the organization's finite resources — capital, talent, executive attention — are concentrated on the initiatives most likely to generate measurable value. It also creates a common language between business leaders and technology teams, replacing abstract AI enthusiasm with concrete investment cases that boards can evaluate and approve.

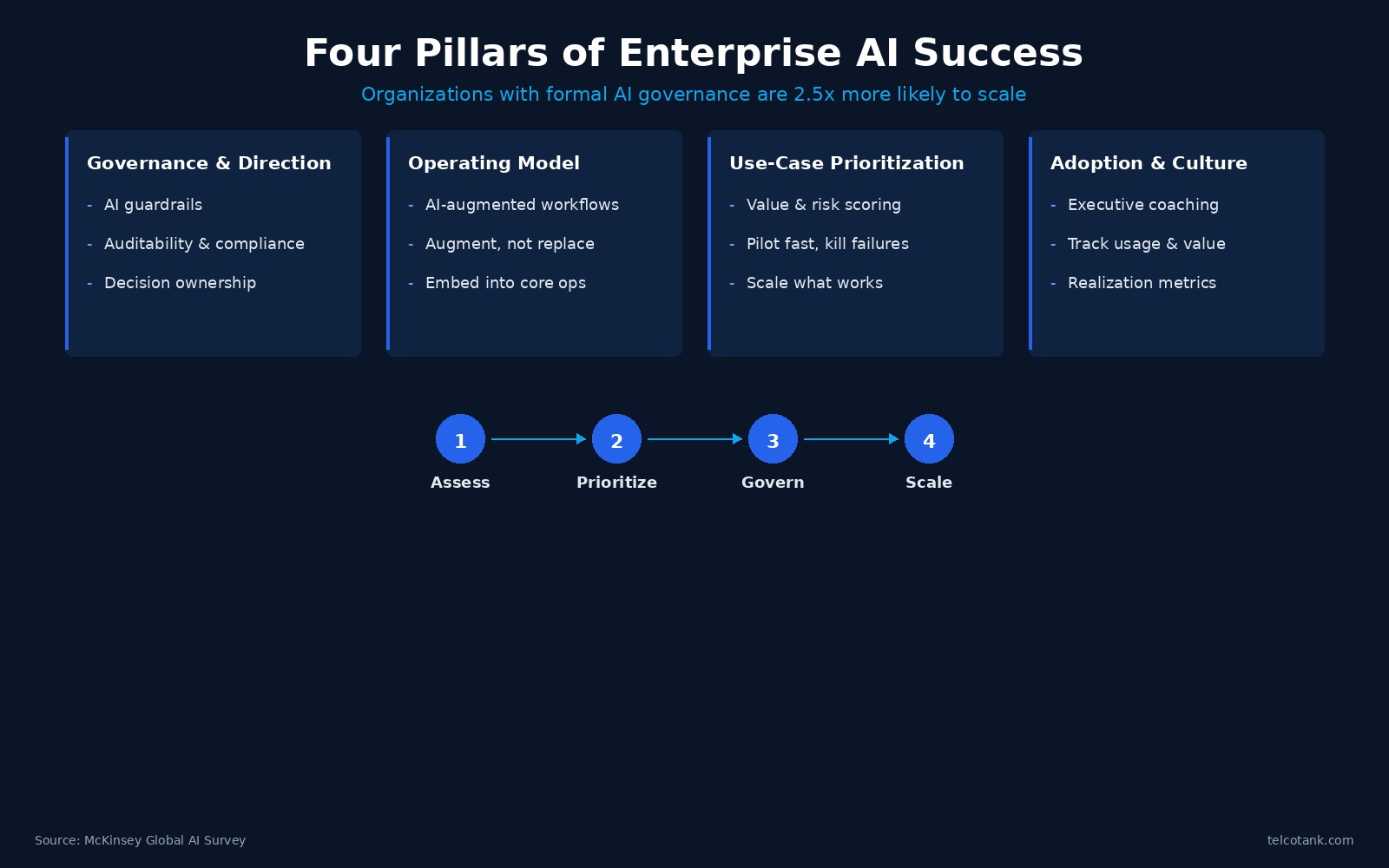

The second critical discipline is governance — and specifically, governance that is designed at the board level, not delegated to IT. The organizations that scale AI successfully establish clear answers to a set of fundamental questions before writing a single line of production code: Who owns AI strategy execution? How are use cases prioritized and approved? What are the ethical red lines? How is AI performance measured and reported to the board? Who governs data access and quality? How is AI risk escalated? What is the build-versus-buy-versus-partner policy? And how is workforce readiness tracked?

These are not technical questions. They are business questions. And the failure to answer them is the single largest source of enterprise AI failure. Research from McKinsey indicates that organizations with formal AI governance frameworks are 2.5 times more likely to scale AI successfully than those without. The reason is straightforward: governance provides the guardrails that allow speed. Without it, every AI initiative becomes a bespoke negotiation over risk, compliance, data access, and accountability — a negotiation that most organizations resolve by doing nothing.

The third discipline is operating model design. AI does not succeed when it is layered on top of existing processes as an afterthought. It succeeds when workflows are redesigned to be AI-augmented — when the operating model explicitly defines how human decision-making and AI capability interact. This means establishing an AI control function that manages the intake, development, and scaling of use cases through a structured lifecycle. It means defining clear roles: who commissions AI work, who builds it, who validates it, who owns it in production, and who measures its value. It means creating sprint-based delivery cycles — typically three to four weeks — that allow rapid iteration, fast failure, and disciplined scaling of what works.

The most effective operating models treat AI as a portfolio, not a project. They establish a use-case factory — a repeatable process for moving initiatives from ideation through piloting to enterprise-wide deployment. Each stage has defined quality gates, value measurement KPIs, and clear accountability. The model is designed to kill underperforming initiatives as rigorously as it scales successful ones. This portfolio discipline is what prevents the all-too-common pattern of AI sprawl, where dozens of disconnected experiments consume resources without ever reaching the scale required to generate meaningful returns.
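The use-case factory can be pictured as a small stage-gate state machine. The sketch below is one possible shape in Python, offered as an assumption-laden illustration: the stage names, the single "KPI attainment" number (actual value delivered divided by target), and the gate and kill thresholds are all hypothetical. In practice each gate would weigh several KPIs and a named owner would make the call.

```python
from enum import Enum


class Stage(Enum):
    IDEATION = 1
    PILOT = 2
    DEPLOYED = 3   # enterprise-wide deployment
    KILLED = 4


# Hypothetical quality gates: minimum KPI attainment needed to leave a stage.
GATES = {Stage.IDEATION: 0.5, Stage.PILOT: 0.7}


def review(stage: Stage, kpi_attainment: float, kill_below: float = 0.3) -> Stage:
    """Return the stage an initiative moves to after a gate review.

    kpi_attainment is the realized value as a fraction of target
    (an assumed, simplified measure).
    """
    if stage in (Stage.DEPLOYED, Stage.KILLED):
        return stage                      # terminal stages do not change
    if kpi_attainment < kill_below:
        return Stage.KILLED               # kill underperformers decisively
    if kpi_attainment >= GATES[stage]:
        return Stage(stage.value + 1)     # gate passed: advance one stage
    return stage                          # hold and iterate another sprint


# Hypothetical portfolio review across three initiatives.
portfolio = {
    "churn prediction": (Stage.PILOT, 0.9),
    "invoice matching": (Stage.PILOT, 0.6),
    "internal trivia bot": (Stage.IDEATION, 0.1),
}

for name, (stage, kpi) in portfolio.items():
    print(f"{name}: {stage.name} -> {review(stage, kpi).name}")
```

Making the kill rule as explicit as the promotion rule is the point of the design: an initiative that neither advances nor dies is exactly the sprawl the portfolio discipline is meant to prevent.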

The fourth discipline — and arguably the most underestimated — is adoption and culture. The technical literature on AI is vast. The literature on getting organizations to actually use AI is thin. Yet workforce adoption is the variable that determines whether a technically successful AI implementation delivers business value or sits unused. Winning organizations invest as heavily in change management, executive coaching, and workforce enablement as they do in model development. They track not just model accuracy but usage rates, quality of human-AI interaction, and value realization at the business-unit level.

The board's role in all of this cannot be overstated. AI transformation is not a technology initiative that can be delegated to IT and reviewed quarterly. It is a strategic transformation that requires active board engagement on governance, risk, investment prioritization, and value measurement. The most successful enterprises establish a direct line of accountability from AI initiatives to the board — with regular reporting on use-case progress, value realization against targets, risk exposure, and workforce readiness. This level of board engagement creates the organizational gravity that pulls AI out of the pilot phase and into the operational core of the enterprise.

The phased approach matters as well. Organizations that try to do everything at once — governance, operating model, use-case development, and cultural change simultaneously across the entire enterprise — almost invariably fail. The disciplined alternative is a two-phase model. The first phase focuses on direction and readiness: assessing the organization's AI maturity across strategy, data, talent, technology, governance, and culture; conducting structured use-case workshops with business unit leaders; designing the governance framework and target operating model; and producing a prioritized roadmap that the board can evaluate with a clear build, pause, or stop decision. Only after this foundation is in place does the second phase begin: embedded delivery of prioritized use cases through sprint-based cycles, with continuous measurement and value realization.

The data supports this phased, business-driven approach. Organizations that invest in readiness assessment and governance design before launching AI development report 60 percent faster time to measurable value, 45 percent higher workforce adoption rates, and significantly lower regulatory and reputational risk. The reason is not mysterious: they know what to build, they know who is accountable, they have the guardrails to move fast, and they have the organizational buy-in to sustain the effort through the inevitable setbacks that accompany any enterprise transformation.

The strategic imperative is clear. AI is not optional for enterprises that intend to remain competitive. But the path to value is not through more pilots, more models, or more technology investment. It is through the hard, unglamorous work of governance, operating model design, use-case prioritization, and workforce adoption. The organizations that master these disciplines will capture the extraordinary value that AI promises. Those that keep treating AI as a technology problem will keep joining the 80 percent that fail.


Hakan Dulge

Founder & Managing Director, Telcotank. 20+ years in telecom transformation, AI strategy, and digital infrastructure advisory.