Key Takeaway

The enterprise AI failure rate is rising, not falling. While organizations pour billions into AI initiatives, the vast majority will never move past pilot stage. The difference between the 80% that fail and the 20% that succeed comes down to one thing above all: strategy before technology.

Here is a number that should alarm every board of directors in the Fortune 500: by 2027, roughly 80% of enterprise AI projects will have failed to deliver meaningful business outcomes. Not underperformed. Not delayed. Failed. Shuttered, deprioritized, or quietly absorbed into IT budgets where they generate reports no one reads. This is not a prediction we make lightly. It is the convergence of data from Gartner, McKinsey, Forrester, and our own advisory work across telecom, financial services, and industrial enterprises over the past four years.

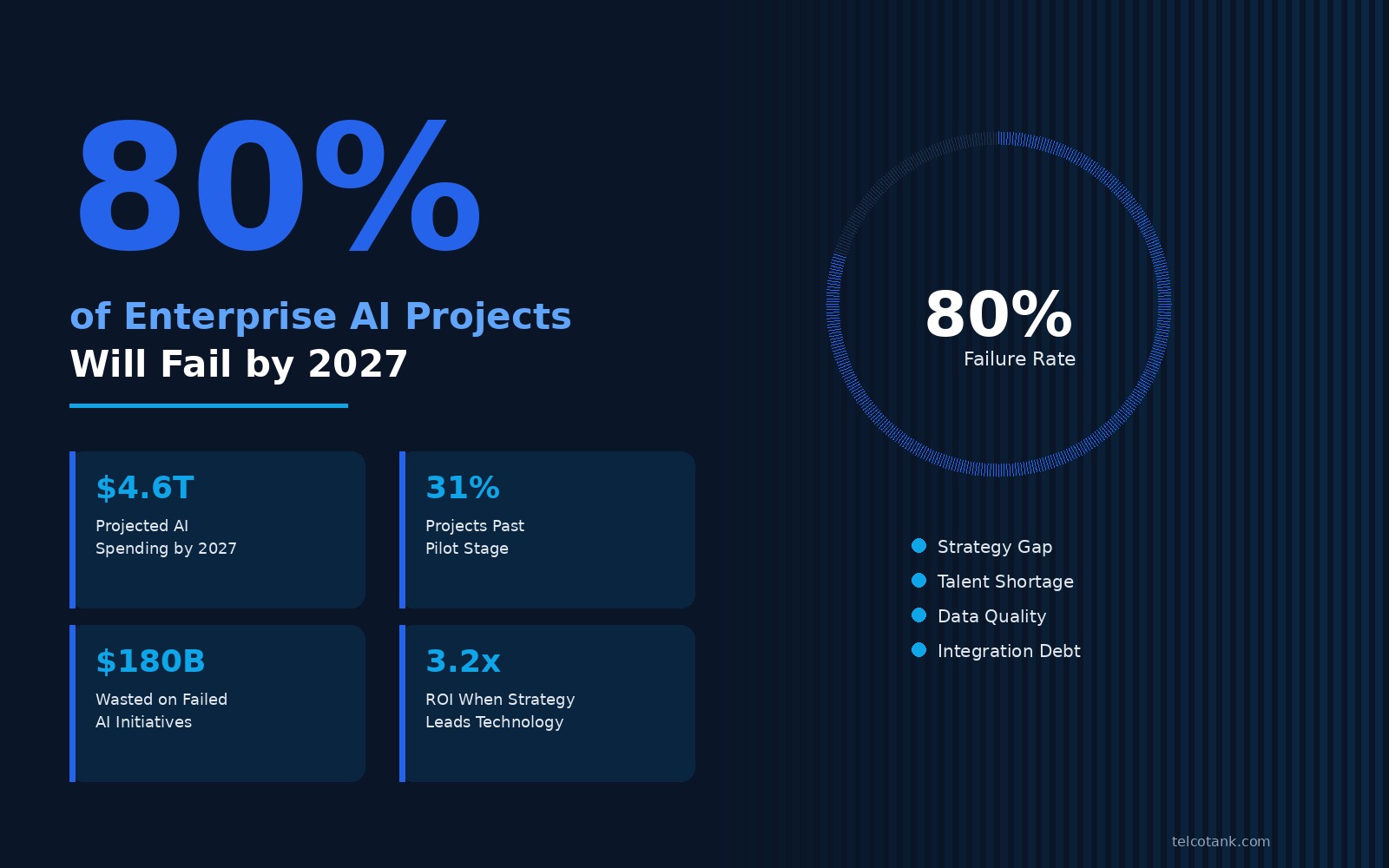

The scale of investment makes the failure rate even more striking. Global enterprise AI spending is projected to reach $4.6 trillion by 2027, according to IDC's latest forecast. If the 80% failure rate holds across that spending, approximately $3.7 trillion will go to initiatives that deliver no measurable return. To put that in perspective, the entire GDP of Germany is roughly $4.2 trillion. We are on track to waste nearly as much money on failed AI projects as the annual economic output of Europe's largest economy.
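The arithmetic behind that comparison can be checked in a few lines. This is a minimal sketch using only the figures quoted in this article. It assumes the 80% failure rate applies evenly to every dollar of spending, which is a simplification; the variable and function names are illustrative.

```python
# Figures quoted in this article, in trillions of US dollars.
TOTAL_AI_SPEND_TN = 4.6   # projected global enterprise AI spending by 2027
FAILURE_RATE = 0.80       # share of projects expected to fail
GERMANY_GDP_TN = 4.2      # approximate GDP of Germany


def implied_failed_spend(total_spend_tn: float, failure_rate: float) -> float:
    """Spending attributable to failed projects.

    Assumption: failure is spread evenly across spending, so the
    project failure rate can be applied directly to the dollar total.
    """
    return total_spend_tn * failure_rate


failed_spend_tn = implied_failed_spend(TOTAL_AI_SPEND_TN, FAILURE_RATE)
share_of_german_gdp = failed_spend_tn / GERMANY_GDP_TN

print(f"Implied failed spend: ${failed_spend_tn:.2f} trillion")
print(f"As a share of German GDP: {share_of_german_gdp:.0%}")
```

Under these assumptions the implied figure is about $3.68 trillion, which is somewhat below the German GDP figure rather than above it.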

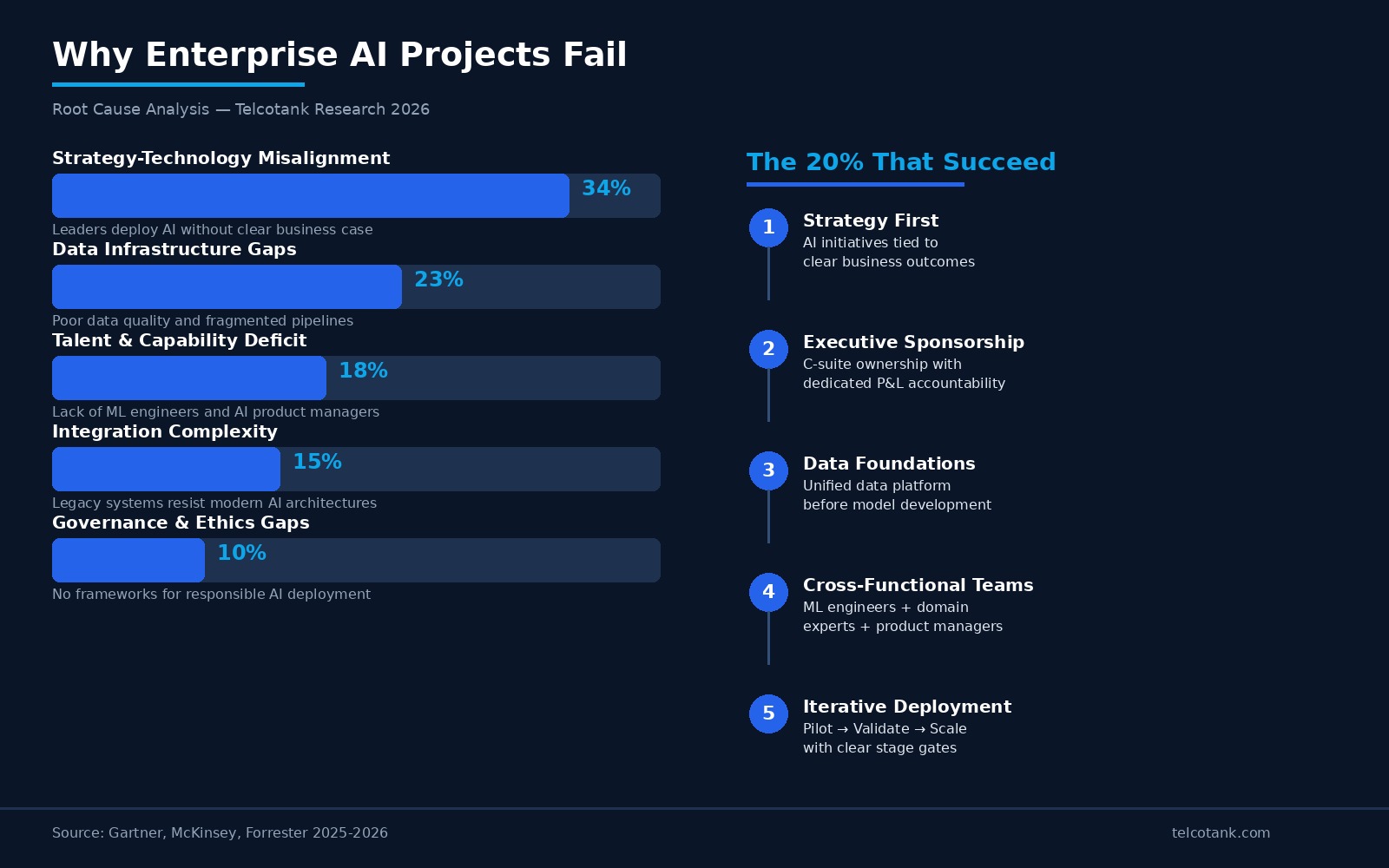

Why are so many AI projects failing? The answer is not technical complexity, although that plays a role. The primary failure mode — responsible for roughly 34% of all enterprise AI project failures — is what we call strategy-technology misalignment. Organizations deploy AI because they feel they should, not because they have identified a specific business problem that AI uniquely solves. The result is what we have seen repeatedly in our advisory engagements: beautifully architected AI systems that solve problems no executive actually cares about.

A European Tier-1 telecom operator we worked with in 2024 spent $45 million building an AI-powered network optimization platform. The technical execution was flawless. The models were accurate. The dashboard was elegant. Six months after launch, utilization was below 8%. Why? Because the network operations team had already optimized the same processes manually over two decades. The AI system was marginally better, with perhaps a 3-4% improvement in key metrics, but the organizational disruption required to adopt it far exceeded the incremental value. That is a $45 million lesson in what happens when technology leads strategy.

The second-largest failure driver, accounting for 23% of failed projects, is data infrastructure. Most enterprises do not have the data foundations required for production AI. Their data lives in silos, with different formats, different owners, and different quality standards. Building an AI model on fragmented data is like constructing a skyscraper on sand. The model might work in the lab, but it collapses under real-world conditions. McKinsey's 2025 AI implementation survey found that 67% of enterprises that abandoned AI projects cited data quality as the primary reason, not model performance.

Talent gaps account for another 18% of failures. The global shortage of ML engineers, data scientists, and AI product managers is well documented. But the more insidious talent gap is at the leadership level. Most organizations lack executives who understand both the technical capabilities of AI and the business model implications. Without that bridge, AI teams build impressive technology that never connects to revenue, and business teams set expectations that no AI system could realistically meet. The result is mutual frustration and, inevitably, project cancellation.

Integration complexity and governance gaps round out the remaining failure categories at 15% and 10% respectively. Legacy enterprise systems — particularly in telecom, banking, and manufacturing — were never designed for real-time AI inference. Retrofitting AI into architectures built in the 2000s is expensive, slow, and fragile. Meanwhile, the absence of clear AI governance frameworks means that even successful pilots stall when legal, compliance, or ethics teams raise concerns that no one anticipated during the proof-of-concept phase.
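For readers who want to see the breakdown in one place, the five failure drivers and their shares can be tabulated and sanity-checked as follows. This is a minimal sketch; the percentages are the ones quoted above, and the dictionary keys and variable names are illustrative labels.

```python
# Share of failed enterprise AI projects by primary driver,
# using the percentages quoted in this article.
FAILURE_SHARES_PCT = {
    "strategy-technology misalignment": 34,
    "data infrastructure": 23,
    "talent gaps": 18,
    "integration complexity": 15,
    "governance gaps": 10,
}

# The categories should account for every failed project.
total_pct = sum(FAILURE_SHARES_PCT.values())

# Rank drivers from largest to smallest share.
ranked = sorted(FAILURE_SHARES_PCT.items(), key=lambda kv: kv[1], reverse=True)

# Running total: how much of the failure rate the top N drivers explain.
cumulative = []
running = 0
for driver, pct in ranked:
    running += pct
    cumulative.append((driver, pct, running))

for driver, pct, running_total in cumulative:
    print(f"{driver:<34} {pct:>3}%  (cumulative {running_total}%)")
```

The shares sum to 100%, and the top three drivers (strategy, data, and talent) together account for 75% of failures, none of which is a modeling problem.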

So what are the 20% doing differently? After analyzing over 140 enterprise AI deployments across our client base, we have identified five patterns that distinguish successful AI programs from the 80% that fail. The first and most critical: they start with a business problem, not a technology solution. Successful AI programs begin with a clearly articulated business outcome — increase B2B revenue by 15%, reduce customer churn by 20%, cut supply chain costs by $50 million — and then ask whether AI is the right tool to achieve it. Sometimes it is not. And the willingness to say "AI is not the answer here" is itself a marker of strategic maturity.

The second pattern is executive sponsorship with teeth. Not a CTO who champions the initiative at quarterly reviews, but a C-suite sponsor who owns the P&L outcome. In every successful AI deployment we have studied, there is a named executive whose compensation is tied to the AI program's business results. That changes everything — from resource allocation to organizational priority to the speed of decision-making when obstacles arise.

Third, successful programs invest heavily in data foundations before building models. They spend 60-70% of their AI budget on data infrastructure — unified data platforms, data quality frameworks, real-time pipelines — and only 30-40% on the AI models themselves. Failed programs invert this ratio, spending most of their budget on sophisticated models that sit on top of unreliable data.

Fourth, they build cross-functional teams from day one. Not AI teams that occasionally brief business stakeholders, but integrated squads with ML engineers, domain experts, product managers, and change management professionals working together continuously. The organizational design matters as much as the technical architecture.

Fifth, they deploy iteratively with clear stage gates. Pilot, validate business impact, then scale — with explicit go/no-go criteria at each stage. Failed programs try to build enterprise-wide AI platforms from scratch. Successful programs start with one use case, prove value, and expand systematically. The compound effect of this approach is remarkable: organizations that follow iterative deployment achieve 3.2x higher ROI than those pursuing big-bang implementations.
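The stage-gate idea can be sketched as a simple go/no-go check. This is an illustrative sketch only, not a description of any specific client process: the stage names follow the pilot, validate, scale sequence above, while the metric names and thresholds (`pilot_users`, `measured_uplift_pct`, `sponsor_signed_off`) are hypothetical placeholders that each organization would define for itself.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StageGate:
    """One stage with an explicit go/no-go criterion."""
    name: str
    passes: Callable[[dict], bool]


def run_stage_gates(gates: list[StageGate], metrics: dict) -> list[str]:
    """Return the stages cleared, stopping at the first no-go."""
    cleared = []
    for gate in gates:
        if not gate.passes(metrics):
            break  # no-go: do not advance to later stages
        cleared.append(gate.name)
    return cleared


# Hypothetical criteria; real thresholds depend on the use case.
GATES = [
    StageGate("pilot", lambda m: m["pilot_users"] >= 20),
    StageGate("validate business impact", lambda m: m["measured_uplift_pct"] >= 5.0),
    StageGate("scale", lambda m: m["sponsor_signed_off"]),
]

# Hypothetical program: adoption is fine, but measured uplift is small.
example_metrics = {
    "pilot_users": 35,
    "measured_uplift_pct": 3.5,
    "sponsor_signed_off": True,
}

cleared = run_stage_gates(GATES, example_metrics)
print("Stages cleared:", cleared)
```

In this example the program clears the pilot gate but stops at business-impact validation, so it never reaches the scale decision. The point of writing the criteria down is that the no-go outcome is decided before the money is spent, not after.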

The implications for enterprise leaders in 2026 and 2027 are clear. The AI investment wave is not slowing down — if anything, the pressure to deploy AI is intensifying as competitors and boards demand results. But throwing more money at AI without fixing the underlying strategic, organizational, and data issues will only accelerate the failure rate. The organizations that will capture disproportionate value from AI are the ones that treat it as a business transformation, not a technology project. They invest in strategy before algorithms, people before platforms, and data before models.

We have spent four years watching billions of dollars flow into AI initiatives that were doomed from the start. Not because the technology was wrong, but because the approach was. The 80% failure rate is not inevitable. It is the predictable consequence of deploying technology without strategy. The 20% that succeed prove that enterprise AI works — when it is done right. The question for every CEO and board member reading this is simple: which side of that divide will your organization be on?

For more articles visit our website: telcotank.com

Hakan Dulge

Founder & Managing Director, Telcotank. 20+ years in telecom transformation, AI strategy, and digital infrastructure advisory.