Key Takeaway

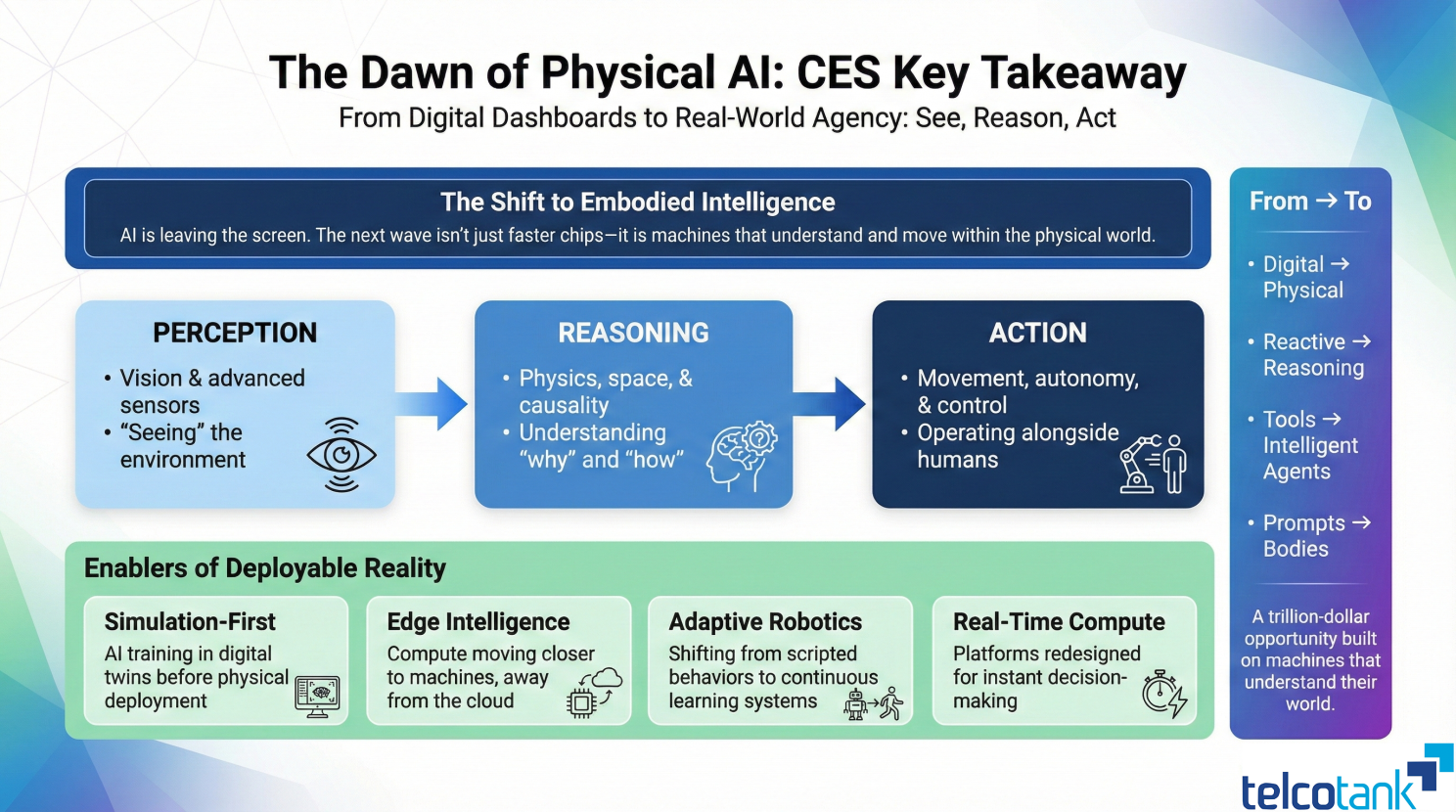

The emergence of Physical AI, which integrates perception, reasoning, and autonomous action, represents a structural shift from digital-first to embodied intelligence systems, with applications across robotics, autonomous vehicles, and industrial automation.

For years, AI has lived inside apps, dashboards, and chat windows. At CES, that changed.

The defining theme wasn't another model or a faster chip: it was Physical AI.

Led by NVIDIA, the industry made something clear: The next wave of AI must see, reason, and act in the real world.

Physical AI combines three fundamental capabilities: perception, through vision and sensors; reasoning, about physics, space, and causality; and action, through movement, autonomy, and control.
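To make the three capabilities concrete, here is a minimal Python sketch of one perceive, reason, act step for a machine approaching an obstacle. Everything in it is an illustrative assumption rather than any vendor's API: the names `Observation`, `perceive`, `reason`, and `act`, the range-sensor readings, and the braking parameters are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    distance_m: float  # estimated distance to the nearest obstacle (hypothetical range sensor)
    speed_mps: float   # current forward speed


def perceive(raw_range_readings, speed_mps):
    """Perception: reduce noisy range readings to one estimate (middle sorted value)."""
    ordered = sorted(raw_range_readings)
    return Observation(distance_m=ordered[len(ordered) // 2], speed_mps=speed_mps)


def reason(obs, max_decel_mps2=2.0, margin_m=0.5):
    """Reasoning: compare stopping distance v^2 / (2a), plus a margin, with the gap."""
    stopping_m = obs.speed_mps ** 2 / (2 * max_decel_mps2)
    return "brake" if stopping_m + margin_m >= obs.distance_m else "cruise"


def act(decision, speed_mps, dt_s=0.1, max_decel_mps2=2.0):
    """Action: return the speed to command for the next control step."""
    if decision == "brake":
        return max(0.0, speed_mps - max_decel_mps2 * dt_s)
    return speed_mps


# One pass through the loop with made-up sensor values.
obs = perceive([4.3, 4.1, 4.2], speed_mps=4.0)
decision = reason(obs)
next_speed = act(decision, obs.speed_mps)
```

In a real system each function would be far richer (learned perception models, a physics-aware planner, a motor controller), but the loop keeps the same shape: sense, decide, move, repeat.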

This is the foundation for robots, autonomous vehicles, intelligent machines, and industrial systems — not demos, but deployable reality.

What stood out most wasn't just robotics or autonomy in isolation. It was how everything converged: simulation-first AI training, edge intelligence moving closer to machines, robotics shifting from scripted behavior to learning systems, and compute platforms redesigned for real-time decision-making.

AI is no longer just assisting humans. It's beginning to operate alongside us.

CES felt different because it signaled a structural shift: from digital to physical, from reactive to reasoning, from tools to intelligent agents with bodies.

The trillion-dollar opportunity ahead won't be built on prompts alone. It will be built on machines that understand the world they move in.

This is the beginning of the Physical AI era.

And the question for leaders is no longer if this changes your industry — but how fast you adapt.

Hakan Dulge

Founder & Managing Director, Telcotank. 20+ years in telecom transformation, AI strategy, and digital infrastructure advisory.